Autonomous (driverless) cars, customer support chatbots, Alexa and Netflix suggestions are a few examples of AI that have already changed our lives.

AI of course stands for Artificial Intelligence! But what does it really mean? To understand this, we should start with a simpler question: “What is an algorithm?”

The earliest known algorithms date back to around 2500 BC and the ancient Babylonian mathematicians, and the idea was later developed by the Egyptians, Greeks, and Arabs. An algorithm is about taking an input, applying a set of rules, and generating an output.

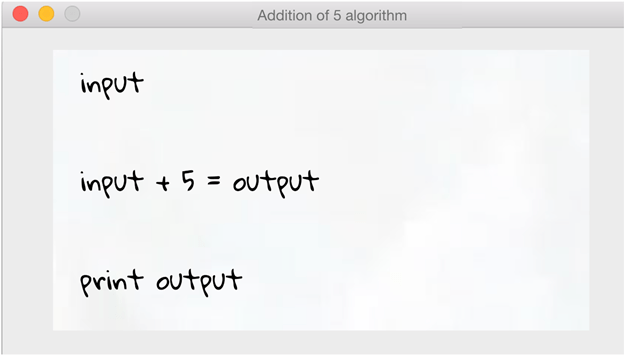

Let’s have a look at a simple example of an algorithm:
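One way this could look in Python (a minimal sketch; the function names `add_five` and `run` are made up for illustration):

```python
def add_five(number):
    """The rule of the algorithm: add 5 to the input."""
    return number + 5


def run(read=input, write=print):
    """Ask for an input number, apply the rule, and print the output.

    `read` and `write` default to the built-in input() and print();
    they are parameters only so the function is easy to try out.
    """
    number = float(read("Enter a number: "))  # input
    write(add_five(number))                   # output


# Call run() to try it interactively.
```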

Here, the computer will ask for an “input” number from the user, will add 5 to that number and then will print the “output” number. This is my algorithm! As simple as that!

But just as I could write this article in English, French, Spanish or even Greek, I could write my algorithm in many different programming languages. By some counts there are around 9,000 programming languages in the world (more than the number of spoken human languages), including Python, C#, R and Java.

The same algorithm can look wildly different depending on the choice of programming language, but still generate the same output. That choice depends on the type of algorithm, the programmer’s experience with the language, its speed, and its user-friendliness.

If an algorithm takes some input, applies logic and mathematics, and then produces an output, AI takes this a step further: it is given inputs together with their known outputs so that it can “learn” the relationship between them and then produce outputs or conclusions when given new inputs. For a “plus 5” algorithm, AI can’t add much value, but where there is a range of complex, nuanced inputs, AI can provide meaningful outputs faster and at a larger scale than humans could.
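To make the difference concrete, here is a rough sketch in which the “plus 5” rule is never written down. Instead, the program is given a few example inputs and outputs and estimates the rule itself by fitting a straight line (ordinary least squares). The example data and the names `fit_line` and `predict` are assumptions made for illustration, and real AI systems learn far more complex relationships than this.

```python
def fit_line(xs, ys):
    """Estimate slope and intercept of y = slope * x + intercept
    from example pairs, using ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical examples produced by the hidden "plus 5" rule.
inputs = [1, 2, 3, 4, 10]
outputs = [6, 7, 8, 9, 15]

slope, intercept = fit_line(inputs, outputs)


def predict(x):
    """Apply the learned rule to a new input."""
    return slope * x + intercept
```

With these examples the fitted rule has a slope of 1 and an intercept of 5, so `predict` behaves like “plus 5” on inputs it has never seen.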

AI or ML?

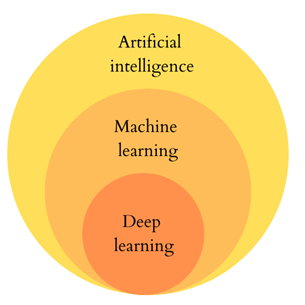

The terms AI and machine learning (ML) are often used interchangeably. Although there are many schools of thought on defining these terms, the most common one (and in my view the most sensible) is:

- AI is the broader term which covers any type of program that can sense, reason, act and adapt.

- ML is a subset of AI which is based on algorithms whose performance improves as they are exposed to more data over time.

- Deep Learning (DL) is a subset of ML in which artificial neural networks adapt and learn from vast amounts of data.

But let’s try and understand this using a more tangible and simpler example.

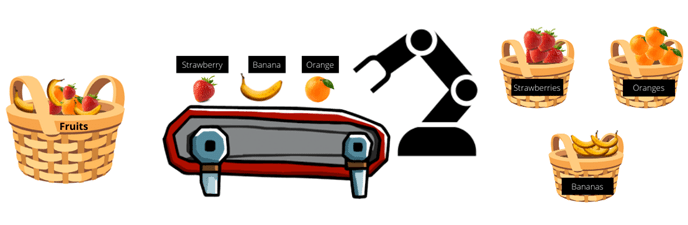

Imagine you are the owner of a fruit shop (which sells bananas, strawberries, and oranges) and you want to split your fruits into three baskets based on their type. With some help from your friends, you label each fruit and place it on a sorting table, where a robot scans each fruit’s label and, based on its type, places it in the respective basket.

This is an example of AI without ML, as the fruits are labelled (or “hard-coded”) by humans and the scanning system just reads their label. This rule-based approach is time-consuming and requires lots of input and labelling from humans to work.
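A rule-based sorter like this could be sketched as a simple lookup from label to basket. The basket names and the `sort_fruit` function are hypothetical; the point is only that every rule is written by a human and nothing is learned.

```python
# Hand-written rules: each label maps to a basket (names are illustrative).
BASKET_FOR_LABEL = {
    "banana": "basket 1",
    "strawberry": "basket 2",
    "orange": "basket 3",
}


def sort_fruit(label):
    """Return the basket for a scanned label, or fail if no rule exists."""
    if label not in BASKET_FOR_LABEL:
        raise ValueError(f"No rule for label {label!r}")
    return BASKET_FOR_LABEL[label]
```

A fruit with an unknown label, such as a kiwifruit, makes the sorter fail until a human adds a new rule.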

So, as an innovative fruit shop keeper, you decide to add some Machine Learning (ML) to your sorting system.

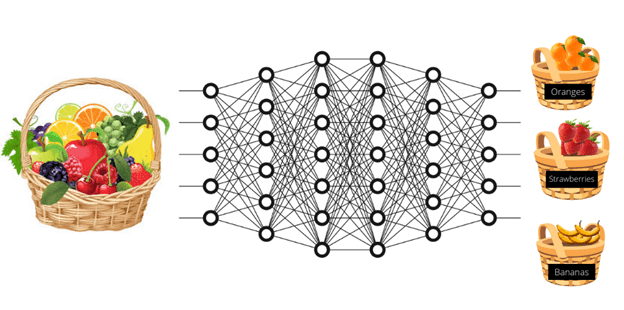

You choose some strawberries, bananas, and oranges, label them accordingly and feed them into the system.

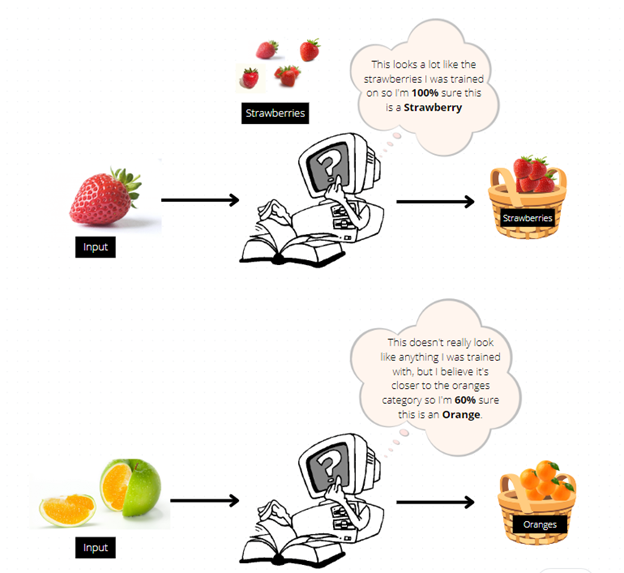

Your computer will learn the features of each fruit (i.e., that strawberries are red, bananas are long and yellow, and oranges are, well, orange) and when given new fruits it will already be trained to categorize them correctly.

The computer will assess each new fruit based on what it has learned and categorise it with a degree of confidence, that is, a measure of how certain the system is about the category it has chosen.

This is an example of supervised learning (one type of ML): the system is “supervised” by being given labelled fruits, so that it can then identify new fruits based on what it learned from those labels. There are other categories of machine learning, which we’ll explore in a subsequent article.
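As a rough illustration of supervised learning with a confidence score, the sketch below uses a nearest-centroid classifier, chosen here only because it is short; it is not the only (or necessarily the best) method. The features (redness from 0 to 1 and length in cm), the training values, and the function names are all assumptions made for the example.

```python
import math
from collections import defaultdict

# Hypothetical labelled examples: (redness 0-1, length in cm) -> fruit type.
TRAINING = [
    ((0.90, 3.0), "strawberry"),
    ((0.95, 3.5), "strawberry"),
    ((0.20, 18.0), "banana"),
    ((0.25, 20.0), "banana"),
    ((0.60, 7.0), "orange"),
    ((0.65, 8.0), "orange"),
]


def train(examples):
    """Learn one average feature vector (centroid) per label."""
    sums = defaultdict(lambda: [0.0, 0.0, 0])
    for (redness, length), label in examples:
        entry = sums[label]
        entry[0] += redness
        entry[1] += length
        entry[2] += 1
    return {label: (s[0] / s[2], s[1] / s[2]) for label, s in sums.items()}


def classify(model, features):
    """Return (label, confidence) for a new fruit.

    Confidence is the closest centroid's share of the inverse
    distances, a crude score between 0 and 1.
    """
    weights = {
        label: 1.0 / (math.dist(features, centroid) + 1e-9)
        for label, centroid in model.items()
    }
    best = max(weights, key=weights.get)
    return best, weights[best] / sum(weights.values())


model = train(TRAINING)
label, confidence = classify(model, (0.22, 19.0))  # a long, not very red fruit
```

Because the two features are on different scales, length dominates the distance here; a more careful version would normalise the features first.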

Finally, as a fruit shop owner, you decide to grow your business further and import more types of fruit. These fruits need to be categorised too, but with the ML method you used previously, labelling them will take a lot of time and the degree of confidence will likely decrease. You therefore decide to use the new and exciting science of Deep Learning.

In essence, deep learning is loosely modelled on the way human brains work and learn. Its main advantage is that the fruits are simply fed into the system without anyone having to define their features by hand (and, in some setups, without labelling them either). It lets the algorithms do that work using multiple network layers.

When given lots of fruit images, the system automatically builds up a pattern of what each fruit looks like using the different layers of its neural network. Each layer picks up different fruit features, such as colour, shape, and size. Following this feature extraction, the deep learning model categorises fruits based on their statistical similarity. It doesn’t need to be told what a kiwifruit is; it just works out that small, oval, fuzzy, brown fruits are likely all the same.
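The last step, grouping fruits by similarity without being told their names, can be sketched on its own. Note that this is not a neural network: in a deep learning system the features would be extracted from images by the network’s layers, whereas here the feature values (length in cm and fuzziness from 0 to 1), the threshold, and the function name are simply assumed for illustration.

```python
import math


def group_by_similarity(items, threshold):
    """Put each item in the first group whose first member is within
    `threshold` (Euclidean distance); otherwise start a new group."""
    groups = []
    for features in items:
        for group in groups:
            if math.dist(features, group[0]) <= threshold:
                group.append(features)
                break
        else:
            # No existing group was close enough.
            groups.append([features])
    return groups


# Hypothetical, unlabelled fruits: (length in cm, fuzziness 0-1).
fruits = [(6.0, 0.9), (18.0, 0.0), (6.5, 0.8), (19.0, 0.1), (5.8, 0.85)]
groups = group_by_similarity(fruits, threshold=2.0)
```

The small, fuzzy fruits end up in one group and the long, smooth ones in another, even though no fruit was ever given a name.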

Deep learning has only become widely practical relatively recently (although it was initially developed in the 1960s), since it requires a huge amount of processing power and data to attempt to replicate human cognitive processes. It is gradually being embedded in many AI applications, including speech recognition, natural language processing and translation.

Summary

This article has briefly defined AI, ML and DL and explained how they relate to each other: although the boundaries between them are still debated, DL is a subset of ML, which in turn is a subset of AI.

AI – along with ML and DL – will transform industries created by humans and will create a plethora of new ones. In this new age, algorithms and networks will run the world and we must keep up.

AI can!

Can you?